Picture This

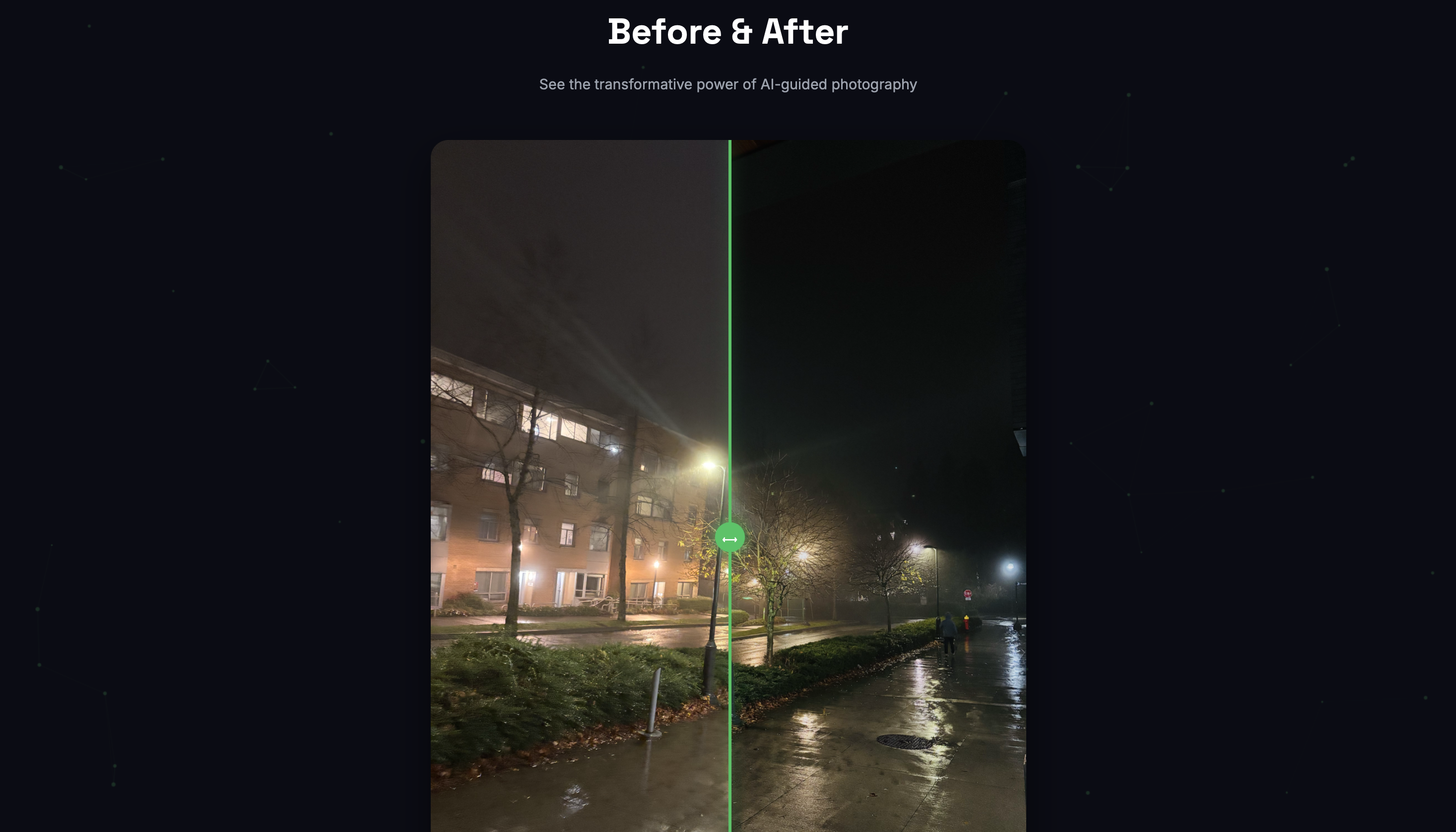

Gallery

What it does

AI camera coach built in 48 hours — 6th place at UBC Kickstart 2025.

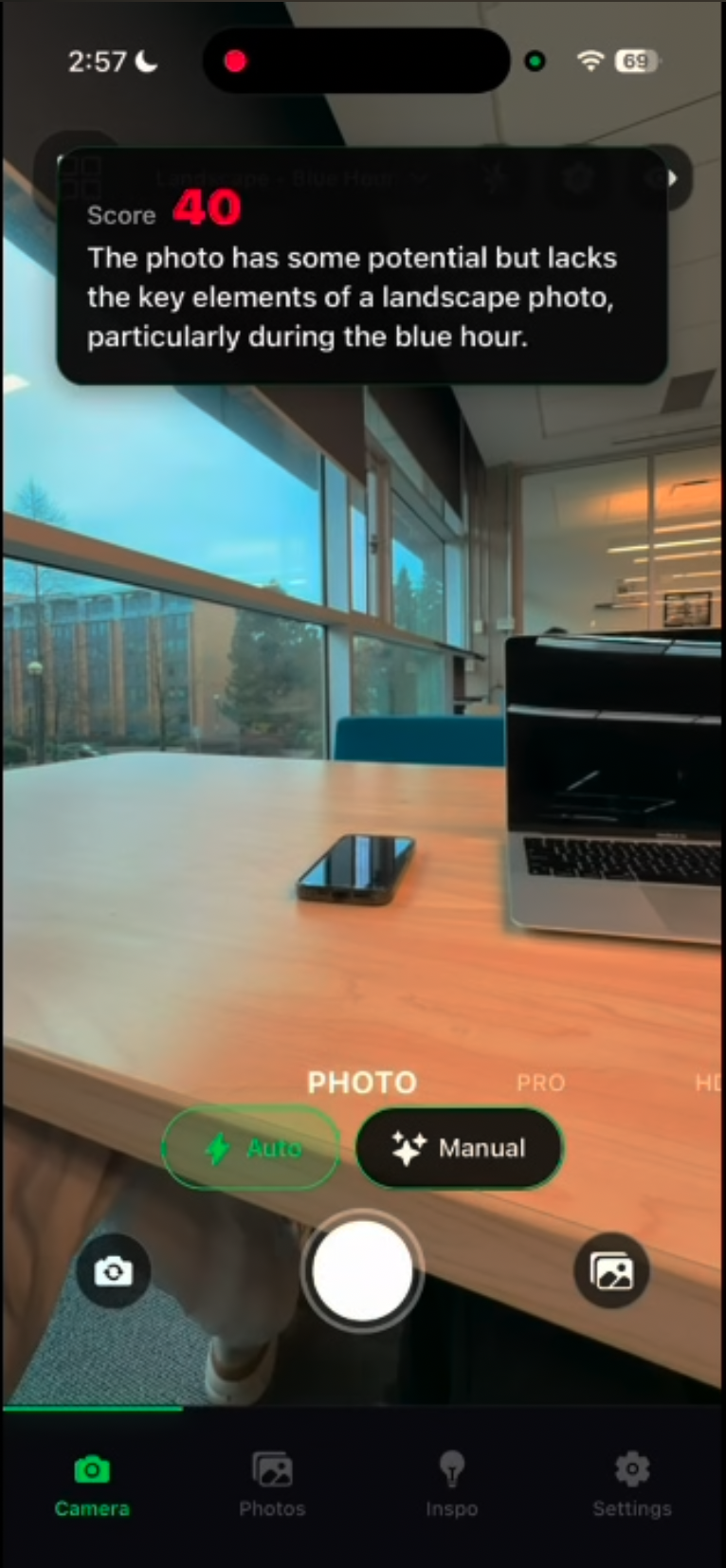

Point your phone at a scene and Picture This tells you how to shoot it better, in real time. The app uses computer vision to analyze composition and framing as you move.

Core Features

- ✦Live camera feed analysis via AWS Bedrock

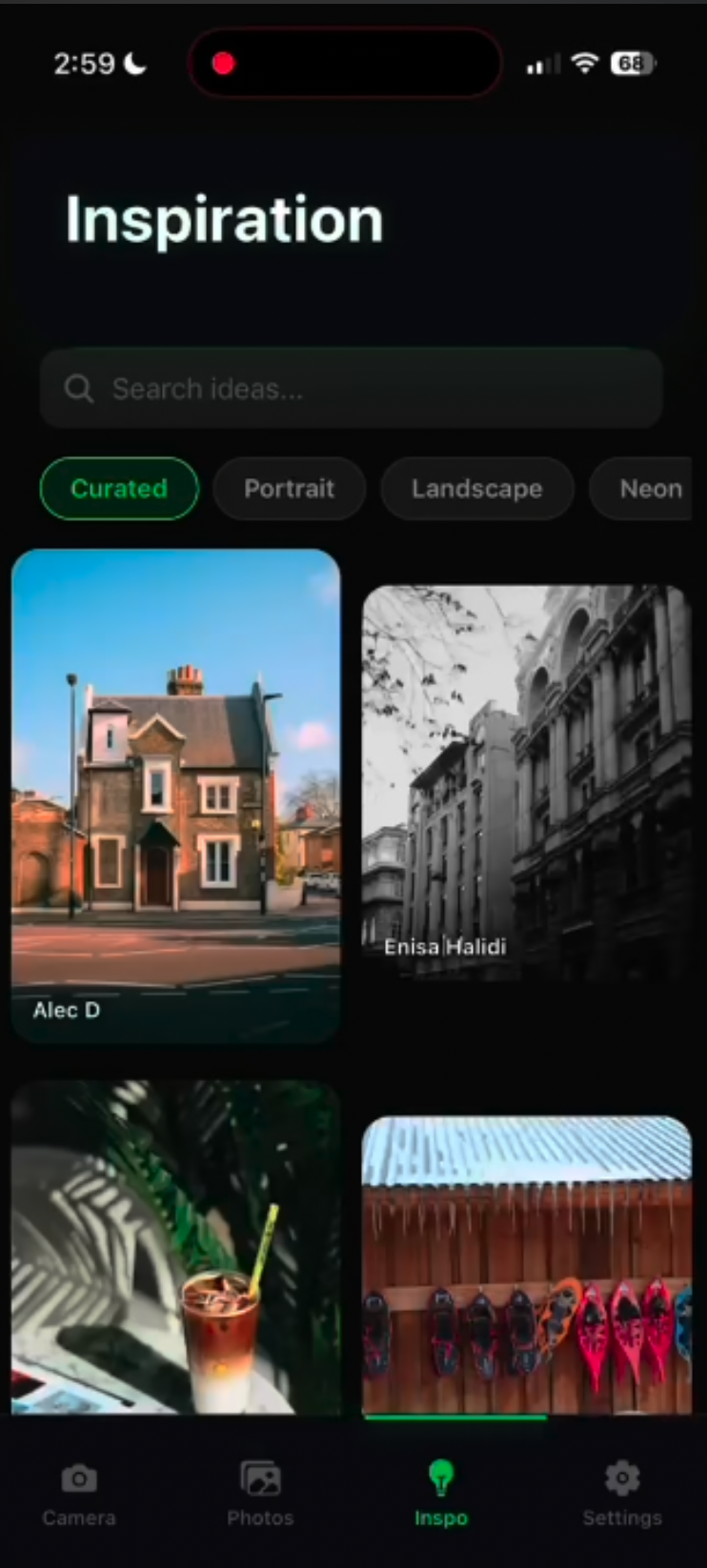

- ✦Personalized recommendations based on user preferences

- ✦Style matching from reference photos via Pexels API

- ✦Dynamic shooting instructions adapted to skill level
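As a rough illustration of how instructions might adapt to skill level and preferences, the sketch below builds a coaching prompt from a user profile. The names `SkillLevel`, `UserPrefs`, `TONE_BY_SKILL`, and `buildCoachingPrompt`, and the prompt wording itself, are assumptions made for this example and are not taken from the project.

```typescript
// Hypothetical profile shape; the real app's preference model may differ.
type SkillLevel = "beginner" | "intermediate" | "advanced";

type UserPrefs = {
  skill: SkillLevel;
  environment: "indoor" | "outdoor";
  favoriteStyles: string[];
};

// One tone instruction per skill level (illustrative wording).
const TONE_BY_SKILL: Record<SkillLevel, string> = {
  beginner: "Use plain language, avoid jargon, and give one step at a time.",
  intermediate: "Name the technique briefly and suggest one or two adjustments.",
  advanced: "Be terse and give exact settings such as ISO and shutter speed.",
};

// Builds the text prompt sent alongside a camera frame.
function buildCoachingPrompt(prefs: UserPrefs, sceneSummary: string): string {
  const styles =
    prefs.favoriteStyles.length > 0
      ? prefs.favoriteStyles.join(", ")
      : "no stated preference";
  return [
    "You are a photography coach.",
    `Scene: ${sceneSummary}`,
    `Environment: ${prefs.environment}`,
    `Preferred styles: ${styles}`,
    TONE_BY_SKILL[prefs.skill],
  ].join("\n");
}
```

Keeping the tone instructions in a lookup table means a new skill level only needs one extra entry, and the prompt builder itself stays unchanged.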

How we built it

The project started with a basic React Native camera view. We then integrated Bedrock's Llama Vision model for scene analysis. The most iterative part was the 'style matching' layer, which required mapping Pexels aesthetic metadata to AI-promptable shooting parameters (ISO, shutter speed, exposure compensation).
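A minimal sketch of what such a mapping could look like, under stated assumptions: `avgColor` mirrors the hex average-color string that Pexels returns for a photo, while `tags`, the thresholds, and the concrete ISO and shutter values are hypothetical choices made for illustration, not the project's actual mapping.

```typescript
// Reference-photo metadata. `tags` is a hypothetical field for this example.
type ReferenceStyle = { avgColor: string; tags: string[] };

type ShootingParams = {
  iso: number;
  shutter: string; // e.g. "1/250"
  exposureCompensation: number; // in EV stops
};

// Approximate brightness (0..1) of a "#RRGGBB" color, using Rec. 709 luma
// weights applied directly to the gamma-encoded channel values.
function brightness(hex: string): number {
  const n = parseInt(hex.replace("#", ""), 16);
  const r = (n >> 16) & 0xff;
  const g = (n >> 8) & 0xff;
  const b = n & 0xff;
  return (0.2126 * r + 0.7152 * g + 0.0722 * b) / 255;
}

function hasTag(style: ReferenceStyle, tag: string): boolean {
  return style.tags.some((t) => t === tag);
}

// Maps a reference style to suggested settings (illustrative thresholds).
function toShootingParams(style: ReferenceStyle): ShootingParams {
  const level = brightness(style.avgColor);
  // Bright references push exposure up, dark ones pull it down,
  // in half-stop steps clamped to the range -1..+1 EV.
  const ev = Math.max(-1, Math.min(1, Math.round((level - 0.5) * 4) / 2));
  const lowLight = hasTag(style, "night") || level < 0.25;
  const motion = hasTag(style, "action");
  return {
    iso: lowLight ? 1600 : 100,
    shutter: motion ? "1/1000" : lowLight ? "1/30" : "1/250",
    exposureCompensation: ev,
  };
}
```

The resulting parameters are plain values, so they can be interpolated into a model prompt or shown directly in the coaching overlay.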

System Flow & Architecture

We integrated AWS Bedrock through a custom async inference pipeline that analyzes live camera frames, and built a personalization layer that adapts coaching to the user's skill level and environment. React Native Skia renders the visual overlays and histograms at high performance without blocking the main thread.
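One common way to keep live-frame inference responsive is a "latest frame wins" gate: at most one request is in flight, and any frames that arrive in the meantime are dropped except the newest. The sketch below shows that pattern, assuming it resembles what the pipeline does; `Frame`, `Advice`, `LatestFrameGate`, and `createPipeline` are hypothetical names, and `analyze` is an injected stand-in for the model call so the example runs without AWS.

```typescript
// Placeholder types, not the project's real ones.
type Frame = { id: number; jpegBase64: string };
type Advice = { frameId: number; tip: string };

// Tracks at most one in-flight request and one pending frame.
// A newer frame replaces an older pending one, so stale frames are dropped.
class LatestFrameGate {
  private busy = false;
  private pending: Frame | null = null;

  // Returns the frame to send now, or null if it was queued instead.
  offer(frame: Frame): Frame | null {
    if (this.busy) {
      this.pending = frame;
      return null;
    }
    this.busy = true;
    return frame;
  }

  // Call when a request finishes; returns the next frame to send, if any.
  complete(): Frame | null {
    const next = this.pending;
    this.pending = null;
    this.busy = next !== null;
    return next;
  }
}

// Wires the gate to an async analyzer and returns a frame callback.
function createPipeline(
  analyze: (frame: Frame) => Promise<Advice>,
  onAdvice: (advice: Advice) => void,
): (frame: Frame) => void {
  const gate = new LatestFrameGate();

  const run = async (frame: Frame): Promise<void> => {
    let current: Frame | null = frame;
    while (current !== null) {
      try {
        onAdvice(await analyze(current));
      } catch {
        // A failed frame is skipped; the next frame gets a fresh attempt.
      }
      current = gate.complete();
    }
  };

  return (frame) => {
    const toSend = gate.offer(frame);
    if (toSend !== null) void run(toSend);
  };
}
```

The callback returned by `createPipeline` can be called on every camera frame. Because stale frames are discarded rather than queued, the advice shown to the user lags the camera by at most one in-flight request plus one pending frame.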

Tech Stack